Reflection from Layered Surfaces due to Subsurface Scattering

Pat Hanrahan; Wolfgang Krueger

Princeton University; German National Research Center for Computer Science


Abstract

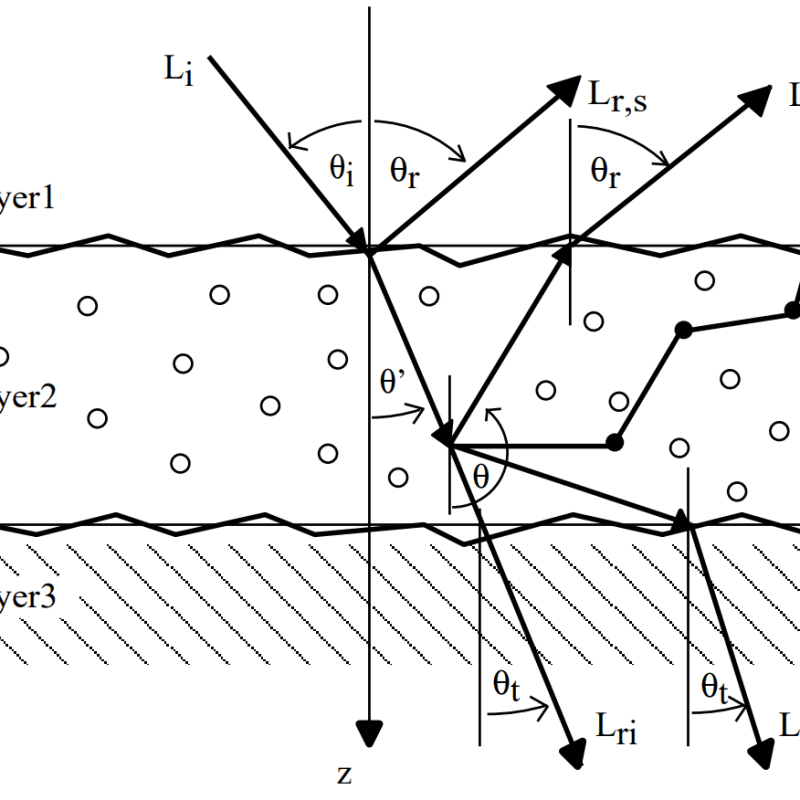

The reflection of light from most materials consists of two major terms: the specular and the diffuse. Specular reflection may be modeled from first principles by considering a rough surface consisting of perfect reflectors, or micro-facets. Diffuse reflection is generally considered to result from multiple scattering either from a rough surface or from within a layer near the surface. Accounting for diffuse reflection by Lambert’s Cosine Law, as is universally done in computer graphics, is not a physical theory based on first principles. This paper presents a model for subsurface scattering in layered surfaces in terms of one-dimensional linear transport theory. We derive explicit formulas for backscattering and transmission that can be directly incorporated in most rendering systems, and a general Monte Carlo method that is easily added to a ray tracer. This model is particularly appropriate for common layered materials appearing in nature, such as biological tissues (e.g., skin and leaves) or inorganic materials (e.g., snow, sand, paint, and varnished or dusty surfaces). As an application of the model, we simulate the appearance of a face and a cluster of leaves from experimental data describing their layer properties.
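For reference, the Lambertian baseline that the abstract contrasts its model against can be sketched in a few lines. This is only an illustration of Lambert's Cosine Law, not the subsurface model the paper derives; the function name `lambertian_radiance` and its parameters are hypothetical and chosen here for clarity.

```python
import math


def lambertian_radiance(albedo, normal_irradiance, cos_theta_i):
    """Outgoing radiance of an ideal Lambertian (perfectly diffuse) surface.

    albedo            -- fraction of incident light reflected, in [0, 1]
    normal_irradiance -- irradiance the light would deliver at normal incidence
    cos_theta_i       -- cosine of the angle between surface normal and light

    All names are illustrative assumptions, not taken from the paper.
    """
    # Light arriving from below the surface contributes nothing.
    cos_theta_i = max(0.0, cos_theta_i)
    # Lambert's Cosine Law: received irradiance falls off with cos(theta_i).
    irradiance = normal_irradiance * cos_theta_i
    # A Lambertian surface reflects equally in all directions; the 1/pi
    # factor converts reflected irradiance into outgoing radiance.
    return albedo / math.pi * irradiance
```

The result is independent of the viewing direction, which is exactly the simplification that a transport-based subsurface model is meant to replace.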

Mip-NeRF 360: Unbounded Anti-Aliased Neural Radiance Fields

Jonathan T. Barron, Ben Mildenhall, Dor Verbin, Pratul P. Srinivasan, Peter Hedman

Google Research; Harvard University


Abstract

Though neural radiance fields (NeRF) have demonstrated impressive view synthesis results on objects and small bounded regions of space, they struggle on "unbounded" scenes, where the camera may point in any direction and content may exist at any distance. In this setting, existing NeRF-like models often produce blurry or low-resolution renderings (due to the unbalanced detail and scale of nearby and distant objects), are slow to train, and may exhibit artifacts due to the inherent ambiguity of the task of reconstructing a large scene from a small set of images. We present an extension of mip-NeRF (a NeRF variant that addresses sampling and aliasing) that uses a non-linear scene parameterization, online distillation, and a novel distortion-based regularizer to overcome the challenges presented by unbounded scenes. Our model, which we dub "mip-NeRF 360" as we target scenes in which the camera rotates 360 degrees around a point, reduces mean-squared error by 57% compared to mip-NeRF, and is able to produce realistic synthesized views and detailed depth maps for highly intricate, unbounded real-world scenes.
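The abstract does not spell out the non-linear scene parameterization. As a minimal sketch of the general idea, assume a contraction that leaves points inside the unit ball unchanged and squashes everything farther away into a ball of radius 2, so that arbitrarily distant content maps to a bounded domain. The function name `contract` and the specific formula below are assumptions made for illustration.

```python
import math


def contract(point):
    """Map an unbounded 3D point into a ball of radius 2.

    Points with norm <= 1 are returned unchanged; points farther away are
    rescaled so their new norm is 2 - 1/norm, which approaches 2 as the
    original distance goes to infinity. This formula is an assumed example
    of a scene contraction, not quoted from the abstract.
    """
    norm = math.sqrt(sum(c * c for c in point))
    if norm <= 1.0:
        return tuple(point)
    # New length (2 - 1/norm) applied along the original direction.
    scale = (2.0 - 1.0 / norm) / norm
    return tuple(scale * c for c in point)
```

For example, `contract((2.0, 0.0, 0.0))` keeps the direction of the input but shortens it to length 1.5, while a point a million units away lands just inside radius 2.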

Comparisons

NeRF, mip-NeRF, NeRF++, Deep Blending, Point-Based Neural Rendering, Stable View Synthesis